KentBrockman

Members-

Posts

74 -

Joined

-

Last visited

Converted

-

Gender

Male

KentBrockman's Achievements

Rookie (2/14)

0

Reputation

-

Thank you SlrG, I have it running now and upload bandwidth is being throttled as I was hoping. I can't quite make sense of the set rate vs. the actual speeds I am seeing. I have tried 500KB/s and 1000KB/s for the overall up/down rates, but my router is telling me the actual rate is between 5-10Mbps. Either way I am happy; I was just wondering if anyone knew how the set rates relate to the real-world numbers. Thanks again
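Part of the gap may simply be units. Here is a minimal sketch of the conversion, assuming the configured rate is in kilobytes per second (as the "KB/s" suggests) and the router reports megabits per second. The function name and the 1000-vs-1024 bytes-per-KB option are my own assumptions, not something taken from the plugin or from mod_shaper.

```python
def kb_per_s_to_mbps(kb_per_s: float, bytes_per_kb: int = 1000) -> float:
    """Convert kilobytes per second to megabits per second.

    bytes_per_kb is an assumption: use 1000 for decimal KB, 1024 for binary KiB.
    """
    bits_per_s = kb_per_s * bytes_per_kb * 8  # 8 bits in a byte
    return bits_per_s / 1_000_000             # 1 Mbps = 1,000,000 bits/s


if __name__ == "__main__":
    for rate in (500, 1000):
        decimal = kb_per_s_to_mbps(rate)
        binary = kb_per_s_to_mbps(rate, bytes_per_kb=1024)
        print(f"{rate} KB/s is about {decimal:.3f} Mbps (or {binary:.3f} Mbps with 1024-byte KB)")
```

Under those assumptions 500KB/s works out to roughly 4 Mbps and 1000KB/s to roughly 8 Mbps. That is in the same range as the 5-10Mbps the router shows, and protocol overhead and the way the router samples traffic could plausibly account for the rest.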

-

I have been using this plugin for a while and it has been great. Lately I am having trouble with people hammering my server and using all of my upload bandwidth. I see there is a module called mod_shaper that would allow me to limit the bandwidth a user is using: http://www.castaglia.org/proftpd/modules/mod_shaper.html Can anyone please point me in the right direction to get this implemented? Or is this something that has to be added to the plugin? Thanks

-

Hi Guys, I need your help. I have been struggling with this issue for almost a week now. It all started when my parity drive went missing and I thought it needed replacing. I RMA'd the drive, but ever since I have had issues with replacing the parity drive, preclearing new drives, etc. I have tried different drives, different cables and different SATA ports with no success. The log shows something like this: print_req_error: I/O error, dev sdd, sector 2951164368 I have attached diagnostics; hopefully someone can help me. Edit: Forgot to mention I am on version 6.8.0 after downgrading from 6.8.2, which I thought could have been the problem. Thanks tower-diagnostics-20200207-1914.zip

-

It looks like it might be the controller. My parity drive just red-balled and is showing similar errors. An extended SMART test seems to indicate the drive is OK. I had not heard of issues with the SAS2LP, but it looks like the consensus is that an LSI-based card is the way to go. I am looking at this one: http://www.ebay.ca/itm/291641245650 Any last advice before I make the jump to the new controller? tower-diagnostics-20170819-1044.zip

-

Hi All,

Running Unraid 6.3.5 and I have noticed the following errors when I start a parity check:

Aug 14 20:15:42 Tower kernel: sas: sas_ata_task_done: SAS error 8a (Errors)
Aug 14 20:15:42 Tower kernel: sas: Enter sas_scsi_recover_host busy: 1 failed: 1 (Drive related)
Aug 14 20:15:42 Tower kernel: sas: ata10: end_device-1:3: cmd error handler (Errors)
Aug 14 20:15:42 Tower kernel: sas: ata7: end_device-1:0: dev error handler (Drive related)
Aug 14 20:15:42 Tower kernel: sas: ata8: end_device-1:1: dev error handler (Drive related)
Aug 14 20:15:42 Tower kernel: sas: ata9: end_device-1:2: dev error handler (Drive related)
Aug 14 20:15:42 Tower kernel: sas: ata10: end_device-1:3: dev error handler (Drive related)
Aug 14 20:15:42 Tower kernel: ata10.00: exception Emask 0x0 SAct 0x0 SErr 0x0 action 0x6 (Errors)
Aug 14 20:15:42 Tower kernel: ata10.00: cmd 25/00:00:68:e7:04/00:04:00:00:00/e0 tag 28 dma 524288 in (Drive related)
Aug 14 20:15:42 Tower kernel: res 01/04:00:f7:b1:48/00:00:00:00:00/e0 Emask 0x12 (ATA bus error) (Errors)
Aug 14 20:15:42 Tower kernel: ata10.00: status: { ERR } (Drive related)
Aug 14 20:15:42 Tower kernel: ata10.00: error: { ABRT } (Errors)

How can I tell what drive or controller is the cause? I have attached diagnostics. Any help would be appreciated.

Cheers

tower-diagnostics-20170814-2020.zip
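As a rough first pass on the log above, one could tally how often each ATA port is named. This is only a sketch: `count_ports` and `PORT_RE` are names made up for illustration, the sample is a subset of the lines from the post with the trailing "(Errors)"/"(Drive related)" tags dropped, and the tally does not by itself say which physical disk or controller sits behind a port.

```python
import re
from collections import Counter

# Subset of the kernel log lines quoted in the post (viewer tags removed).
LOG = """\
Aug 14 20:15:42 Tower kernel: sas: ata10: end_device-1:3: cmd error handler
Aug 14 20:15:42 Tower kernel: sas: ata7: end_device-1:0: dev error handler
Aug 14 20:15:42 Tower kernel: sas: ata8: end_device-1:1: dev error handler
Aug 14 20:15:42 Tower kernel: sas: ata9: end_device-1:2: dev error handler
Aug 14 20:15:42 Tower kernel: sas: ata10: end_device-1:3: dev error handler
Aug 14 20:15:42 Tower kernel: ata10.00: exception Emask 0x0 SAct 0x0 SErr 0x0 action 0x6
Aug 14 20:15:42 Tower kernel: ata10.00: status: { ERR }
Aug 14 20:15:42 Tower kernel: ata10.00: error: { ABRT }
"""

# Matches "ata10:" as well as "ata10.00:" and captures just the port ("ata10").
PORT_RE = re.compile(r"\b(ata\d+)(?:\.\d+)?:")


def count_ports(log: str) -> Counter:
    """Count how many log lines mention each ataN port."""
    counts: Counter = Counter()
    for line in log.splitlines():
        match = PORT_RE.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts


if __name__ == "__main__":
    for port, n in count_ports(LOG).most_common():
        print(port, n)
```

In this excerpt ata10 is the only port with exception, status and error lines, while ata7 through ata9 appear only once each in the error-handler pass. That points at whatever is attached as ata10, but matching the port to a specific drive would still need the rest of the diagnostics.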

-

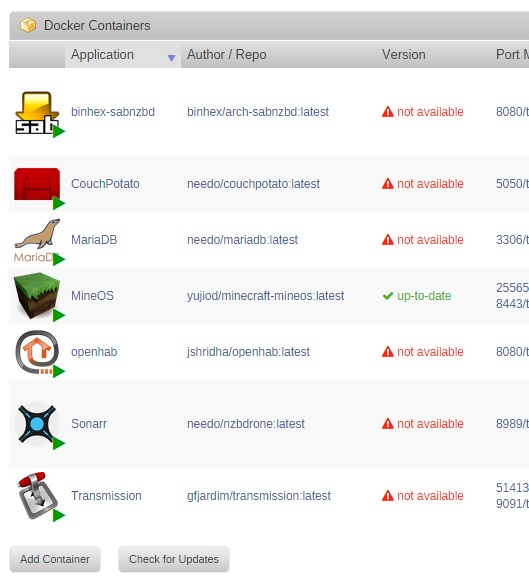

I just upgraded to 6.1 from 6.0.1 and it all seemed to go just fine. I just have one strange problem with some of my dockers: the version column is shown as "Not Available", but they all seem to be working just fine. Any idea what this could be?

-

constant buffering with kodi after upgrade to 6.0.1

KentBrockman replied to raven42's topic in General Support

I have also noticed this on my OpenELEC Kodi box. My buffering is not nearly as bad as you are describing, but it is definitely worse than on 5.6.1.

-

Parity check three times slower than sync or rebuild. Is it normal?

KentBrockman replied to pkn's topic in General Support

johnnie, thanks for the suggestion. I re-arranged my drives like this:

Parity - MB Slot 1
Disk 1 - MB Slot 2
Disk 2 - MB Slot 3
Disk 3 - MB Slot 4
Disk 4 - SAS2LP Port 1
Disk 5 - SAS2LP Port 2
Disk 6 - SAS2LP Port 3
Disk 7 - SAS2LP Port 4
Disk 8 - SAS2LP Port 5
cache - SAS2LP Port 6

Parity check speed did improve a little bit. I averaged 52 MB/s on the last run. It started slow (25-30 MB/s) but got faster after I passed about 50%.

-

Parity check three times slower than sync or rebuild. Is it normal?

KentBrockman replied to pkn's topic in General Support

Here is an update on my situation. I swapped out the processor for the E7500 plus 4GB of RAM, and my parity check speed did improve to around 25-29 MB/s. CPU utilization is sitting at 60%. Definitely an improvement, but still much slower than it was on V5. I had a failed disk yesterday and the rebuild ran at close to 100 MB/s. It looks like there is an issue with 6.0.1 and the SAS2LP controller.

-

Parity check three times slower than sync or rebuild. Is it normal?

KentBrockman replied to pkn's topic in General Support

johnnie.black, I thought this issue was directly related to the SAS2LP controller? TSM confirmed that his parity checks were fast using 6.0 b14 and slow on later versions. Also, the quote from Squid: "Just a FYI, the parity check speed drop which I incurred when LT switched to a preemptible kernel in 6.0b15 has now returned back to its faster speed with 6.1rc-1" would indicate there is a known issue. I have a spare Core2Duo E7500 that I was planning on swapping in anyway, so I will see if the CPU is really the bottleneck.

-

Parity check three times slower than sync or rebuild. Is it normal?

KentBrockman replied to pkn's topic in General Support

I just upgraded to 6.0.1 from V5 and discovered a huge drop in parity check speed. I also have the SAS2LP card, but with an Intel Celeron processor. I can barely sustain 11MB/s during a parity check and my CPU is pinned. My estimated time to finish is around 3 days. I must have missed this potential problem when I was researching the upgrade. Hopefully this can be solved, since it appears to have been introduced during the beta phase.
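As a sanity check on those two numbers, here is a small sketch of how much data a check at that speed would cover in that time. It assumes decimal units (1 MB = 1,000,000 bytes, 1 TB = 10^12 bytes) and a constant speed; the function name is made up for illustration, and the actual parity drive size is not stated in the post.

```python
def implied_size_tb(speed_mb_s: float, days: float) -> float:
    """Data covered at a constant speed over a number of days, in decimal TB."""
    seconds = days * 24 * 60 * 60           # days to seconds
    total_bytes = speed_mb_s * 1e6 * seconds  # MB/s to bytes over the whole run
    return total_bytes / 1e12


if __name__ == "__main__":
    # 11 MB/s sustained for about 3 days comes to about 2.85 TB
    print(f"{implied_size_tb(11, 3):.2f} TB")
```

So 11MB/s and roughly 3 days are consistent with each other for a parity drive of around 3 TB, under the assumptions above.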